Wow! Talk about a slow go. Really did not expect to have this many posts for this project. But, perhaps it demanded them.

Time to get to some serious training. Though I will initially play around a little. I am thinking I will train for about 5 epochs (something like 10-12 minutes execution time). I want to look at what happens if I stop the extra critic training iterations after 2 or 3 epochs. Is the critic at risk of being overrun by the generator after that point, or not?

If it appears to be losing out, I will up the number of training epochs and the number of epochs of extra critic training and see what happens.

I am trying to zero in on a reasonable point to stop the extra training for the critic before I commit to a lengthy training run—likely a couple of hours or so.

I also want to look at the resources being used while training the model: CPU and GPU, processing cycles and memory. Though I don’t know how easy it will be to capture that information. More research to be done.
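One possible way to capture some of those numbers from inside the training script is sketched below. It is only a sketch: it assumes the third-party `psutil` package is installed (it is not mentioned in this post) and falls back to `None` where a library is missing. The function name `resource_snapshot` and the dictionary keys are made up for illustration. Note that `torch.cuda.memory_allocated()` only counts memory held by PyTorch tensors, so it will read lower than what a system monitor shows, and GPU utilization and temperature still need an external tool such as `nvidia-smi`.

```python
import os


def resource_snapshot() -> dict:
    """Return current CPU, process-RAM and GPU-memory readings.

    A value is None when the library needed to read it is unavailable.
    """
    snap = {
        "cpu_percent": None,
        "proc_ram_gb": None,
        "gpu_mem_alloc_gb": None,
        "gpu_mem_peak_gb": None,
    }

    try:
        import psutil  # third-party; assumed to be installed

        # The first call returns a meaningless 0.0; later calls report
        # system-wide CPU use since the previous call.
        snap["cpu_percent"] = psutil.cpu_percent(interval=None)
        rss = psutil.Process(os.getpid()).memory_info().rss
        snap["proc_ram_gb"] = rss / 1024**3
    except ImportError:
        pass

    try:
        import torch

        if torch.cuda.is_available():
            # Memory currently held, and the peak held, by PyTorch tensors.
            snap["gpu_mem_alloc_gb"] = torch.cuda.memory_allocated() / 1024**3
            snap["gpu_mem_peak_gb"] = torch.cuda.max_memory_allocated() / 1024**3
    except ImportError:
        pass

    return snap


print(resource_snapshot())
```

Calling something like this once per epoch, next to the timing print, would give a rough log of how resource use changes over a run.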

Full Training Loop

Well, it looks like we have finally arrived at attempting to achieve the goal of this project: training the GAN to generate people’s faces with and without glasses. I expect the training loop will have a lot in common with the testing block from the last post. Well, in fact, it is pretty much just duplicated.

For development and testing things I will start with just 5 epochs of training. And as I mentioned above, I will stop the extra training of the critic after 3 epochs of training. Not sure using global variables is the best choice, but…

Without further ado here’s the code for that loop.

    # for epoch in range(trn_len):
    # during dev start with 5 epochs
    trn_len = 5
    c_nbr_epochs = 3
    tot_tm = 0
    for epoch in range(trn_len):
        st_tm = time.perf_counter()
        # per-epoch loss totals, summed over all batches
        cl_tot = 0
        gl_tot = 0
        # past the extra-critic epochs: drop the critic/generator ratio to 1
        if epoch >= c_nbr_epochs:
            c_g_ratio = 1
        for _, i_lbls, one_hots, imgs_n_lbls in img_ldr:
            if do_dbg:
                print(f"\none_hots: {one_hots.shape}, imgs_n_lbls: {imgs_n_lbls.shape}")
                # exit(0)
            l_crit, l_gen = train_batch(one_hots, imgs_n_lbls, epoch + 1)
            cl_tot += l_crit
            gl_tot += l_gen
        print(f"epoch {epoch + 1}: critic loss: {cl_tot}, generator loss: {gl_tot}")
        plot_tst_gen(epoch + 1, i_show=False)
        # note: the epoch time includes generating the test images
        tr_tm = time.perf_counter() - st_tm
        print(f"\ttime to run epoch {epoch + 1} (while testing code): {tr_tm}")
        tot_tm += tr_tm
    print(f"Total training time for {trn_len} epochs: {tot_tm}")
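On the doubt about global variables: one alternative, sketched here under assumptions, is to compute the critic-to-generator ratio from the epoch number and hand it to the training step explicitly. The function name `critic_steps_per_gen_step` and the value `extra_ratio=5` are hypothetical; the post does not say what `c_g_ratio` starts at, and how the value would be passed into `train_batch` is left open because that function's full signature is not shown.

```python
def critic_steps_per_gen_step(epoch: int, extra_epochs: int, extra_ratio: int) -> int:
    """Critic updates per generator update for a zero-based epoch index.

    The first `extra_epochs` epochs use `extra_ratio`; later epochs use 1,
    mirroring the `if epoch >= c_nbr_epochs` test in the loop.
    """
    return extra_ratio if epoch < extra_epochs else 1


# extra_ratio=5 is an assumed value, chosen only for illustration
for epoch in range(5):
    ratio = critic_steps_per_gen_step(epoch, extra_epochs=3, extra_ratio=5)
    print(f"epoch {epoch + 1}: {ratio} critic step(s) per generator step")
```

Keeping the schedule in a small pure function also makes it easy to try other cut-off points without touching the loop.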

First Test

Okay, 5 epochs, no extra critic training after 3 epochs. Things certainly go faster for the last two epochs.

    (mclp-3.12) PS F:\learn\mcl_pytorch\chap5> python wgan-gp_g_ng.py
    models loaded to gpu, dataloader instantiated with modified dataset, testing training code
    epoch 1: critic loss: -10107.708984375, generator loss: 10740.4365234375
        time to run epoch 1 (while testing code): 152.42306400003145
    epoch 2: critic loss: -15070.3876953125, generator loss: 16900.056640625
        time to run epoch 2 (while testing code): 150.6800768999965
    epoch 3: critic loss: -13137.2626953125, generator loss: 18081.498046875
        time to run epoch 3 (while testing code): 151.62145969999256
    epoch 4: critic loss: -5690.22900390625, generator loss: 17074.76171875
        time to run epoch 4 (while testing code): 39.163172999979
    epoch 5: critic loss: -3575.84375, generator loss: 16003.017578125
        time to run epoch 5 (while testing code): 39.19414209999377
    Total training time for 5 epochs: 533.0819156999933
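A note on reading those loss figures: the loop adds up the loss of every batch, so each printed value is a total for the epoch and its size depends on how many batches there are. If a per-batch mean is easier to compare across runs, a small accumulator like the one below could be used. This is only a sketch; the class name `RunningMean` and the sample values are made up for illustration.

```python
class RunningMean:
    """Accumulate values and report their total and mean."""

    def __init__(self) -> None:
        self.total = 0.0
        self.count = 0

    def add(self, value: float) -> None:
        # float() also accepts a single-element tensor
        self.total += float(value)
        self.count += 1

    @property
    def mean(self) -> float:
        # avoid dividing by zero before any batch has been added
        return self.total / self.count if self.count else 0.0


# made-up per-batch critic losses, for illustration only
crit = RunningMean()
for batch_loss in (-2.0, -4.0, -6.0):
    crit.add(batch_loss)
print(f"critic loss: total {crit.total}, mean per batch {crit.mean}")
```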

During the full training session:

- CPU @ ~55% utilization
- main memory use @ ~24 GB
- GPU @ 40-50% utilization (~65°C)
- GPU memory @ 4 GB

I am a little surprised by the CPU utilization. As for the main memory, all the images, combined with their labels, are loaded into memory before training starts. It is a trade-off between memory and training time (including CPU utilization). I wonder if those could be loaded to the GPU and perhaps speed things up? (Note, added 2024.09.07: not possible, the GPU doesn’t have enough memory.)
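Whether a preloaded dataset could fit on the GPU can be estimated with a little arithmetic before trying it. The sketch below uses hypothetical numbers throughout: the image count, channel count, image size and card size are not taken from this post. With PyTorch available, the real card size could be read from `torch.cuda.get_device_properties(0).total_memory`. Keep in mind the model, its gradients and the activations need GPU memory as well.

```python
def tensor_gib(n_items: int, channels: int, height: int, width: int,
               bytes_per_value: int = 4) -> float:
    """Size in GiB of n_items dense tensors of shape (channels, height, width)."""
    return n_items * channels * height * width * bytes_per_value / 1024**3


# Hypothetical numbers, not from the post: 10,000 float32 tensors with
# 5 channels (say RGB plus 2 label planes) at 256x256 pixels.
need_gib = tensor_gib(10_000, 5, 256, 256)
gpu_total_gib = 8.0  # hypothetical card size
print(f"dataset needs about {need_gib:.1f} GiB; "
      f"fits on the GPU: {need_gib < gpu_total_gib}")
```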

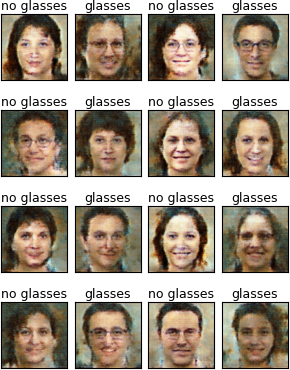

Generator Images After 5th Epoch of Training

Decent progress in the look of the images, but certainly not getting the correct images for the labels given.

Second Test

Let’s try 10 epochs, staying with the first 3 for extra critic training.

    (mclp-3.12) PS F:\learn\mcl_pytorch\chap5> python wgan-gp_g_ng.py
    models loaded to gpu, dataloader instantiated with modified dataset, testing training code
    epoch 1: critic loss: -10308.259765625, generator loss: 10823.6513671875
        time to run epoch 1 (while testing code): 151.68628510000417
    epoch 2: critic loss: -14926.7607421875, generator loss: 16581.197265625
        time to run epoch 2 (while testing code): 149.73669359995984
    epoch 3: critic loss: -13229.71484375, generator loss: 18414.29296875
        time to run epoch 3 (while testing code): 150.0749216999975
    epoch 4: critic loss: -5635.57177734375, generator loss: 17367.751953125
        time to run epoch 4 (while testing code): 38.982497600023635
    epoch 5: critic loss: -3448.558837890625, generator loss: 15934.58984375
        time to run epoch 5 (while testing code): 39.04420140001457
    epoch 6: critic loss: -2666.718994140625, generator loss: 16028.0048828125
        time to run epoch 6 (while testing code): 38.85639790003188
    epoch 7: critic loss: -2084.23779296875, generator loss: 16154.03125
        time to run epoch 7 (while testing code): 38.95157299999846
    epoch 8: critic loss: -1696.235107421875, generator loss: 15966.8193359375
        time to run epoch 8 (while testing code): 38.97428060002858
    epoch 9: critic loss: -1481.40576171875, generator loss: 15503.4736328125
        time to run epoch 9 (while testing code): 38.81838459998835
    epoch 10: critic loss: -1331.57373046875, generator loss: 15656.3642578125
        time to run epoch 10 (while testing code): 38.97995120001724
    Total training time for 10 epochs: 724.1051867000642

CPU, memory and GPU utilization similar to the above. Though CPU stayed closer to 50%.

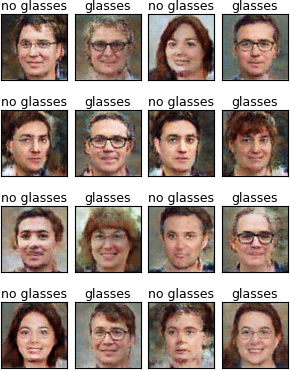

Generator Images After 10th Epoch of Training

Images generally looking better. Fewer errors matching images to labels.

Third Test

Okay, let’s go for 30 epochs. I will again stop the extra critic training after 3 epochs.

    (mclp-3.12) PS F:\learn\mcl_pytorch\chap5> python wgan-gp_g_ng.py
    models loaded to gpu, dataloader instantiated with modified dataset, testing training code
    epoch 1: critic loss: -10282.5380859375, generator loss: 10785.779296875
        time to run epoch 1 (while testing code): 151.81591220002156
    epoch 2: critic loss: -14899.2685546875, generator loss: 16507.8671875
        time to run epoch 2 (while testing code): 149.75620250002248
    epoch 3: critic loss: -13045.1083984375, generator loss: 18222.216796875
        time to run epoch 3 (while testing code): 149.946628900012
    epoch 4: critic loss: -5710.92626953125, generator loss: 17029.74609375
        time to run epoch 4 (while testing code): 38.85917850001715
    epoch 5: critic loss: -3639.538818359375, generator loss: 15739.783203125
        time to run epoch 5 (while testing code): 38.82636200002162
    ... ...
    epoch 26: critic loss: -822.31787109375, generator loss: 14581.0283203125
        time to run epoch 26 (while testing code): 38.83012060000328
    epoch 27: critic loss: -840.53759765625, generator loss: 14375.5341796875
        time to run epoch 27 (while testing code): 38.66069160000188
    epoch 28: critic loss: -810.6693115234375, generator loss: 14383.8955078125
        time to run epoch 28 (while testing code): 38.81977420003386
    epoch 29: critic loss: -834.22412109375, generator loss: 14362.1240234375
        time to run epoch 29 (while testing code): 38.82359179999912
    epoch 30: critic loss: -823.3651123046875, generator loss: 14330.6298828125
        time to run epoch 30 (while testing code): 38.69574639998609
    Total training time for 30 epochs: 1499.3151571000344

CPU, memory and GPU utilization similar to those of the second test. And, following the 18th epoch of training, the images matched the requested labels.

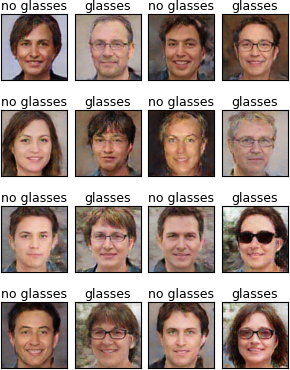

Generator Images After 30th Epoch of Training

Images again looking better and images match the requested labels.

Train Model

Okay, let’s do a full training run. It is going to take a fair bit longer to run. I am going to give the critic extra training for 5 epochs. The whole training session will be for 50 epochs. Let’s see who gives up first: me or the beast.
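How much longer can be roughed out from the three tests above, where an epoch with extra critic training took about 150 seconds and one without about 39 seconds (both including the test image generation). The sketch below just multiplies those out; the function name `estimate_seconds` is made up, and the two per-epoch figures are rounded from the logs, so treat the result as a ballpark only.

```python
def estimate_seconds(n_epochs: int, n_extra: int,
                     t_extra: float = 150.0, t_plain: float = 39.0) -> float:
    """Rough run time: n_extra slow epochs, the rest at the faster rate."""
    n_extra = min(n_extra, n_epochs)
    return n_extra * t_extra + (n_epochs - n_extra) * t_plain


est = estimate_seconds(50, 5)
print(f"estimated: {est:.0f} s (~{est / 60:.0f} min)")
# prints: estimated: 2505 s (~42 min)
```

As a check on the approach, the same formula gives 1503 seconds for the third test (30 epochs, 3 with extra critic training), against the 1499 seconds actually measured.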

    (mclp-3.12) PS F:\learn\mcl_pytorch\chap5> python wgan-gp_g_ng.py
    epoch 1: critic loss: -10215.3623046875, generator loss: 10758.4296875
    epoch 2: critic loss: -14913.734375, generator loss: 16712.4453125
    epoch 3: critic loss: -13072.7373046875, generator loss: 17980.515625
    epoch 4: critic loss: -12580.5341796875, generator loss: 19806.53125
    epoch 5: critic loss: -12765.3525390625, generator loss: 22481.18359375
    ... ...
    epoch 46: critic loss: -1078.3282470703125, generator loss: 18635.6875
    epoch 47: critic loss: -1110.264404296875, generator loss: 18608.93359375
    epoch 48: critic loss: -1109.6563720703125, generator loss: 18483.5703125
    epoch 49: critic loss: -1140.3804931640625, generator loss: 18506.912109375
    epoch 50: critic loss: -1149.96240234375, generator loss: 18485.484375
    Total training time for 50 epochs: 2507.764040100097

Bit of time that, ~40 minutes. But, to me, looks like a significant improvement in the look of the faces. Though the images generated following the last epoch of training had an image that didn’t match the requested label. The images generated following the previous epoch, 49th, didn’t have that problem. So that’s the one I am going to show.

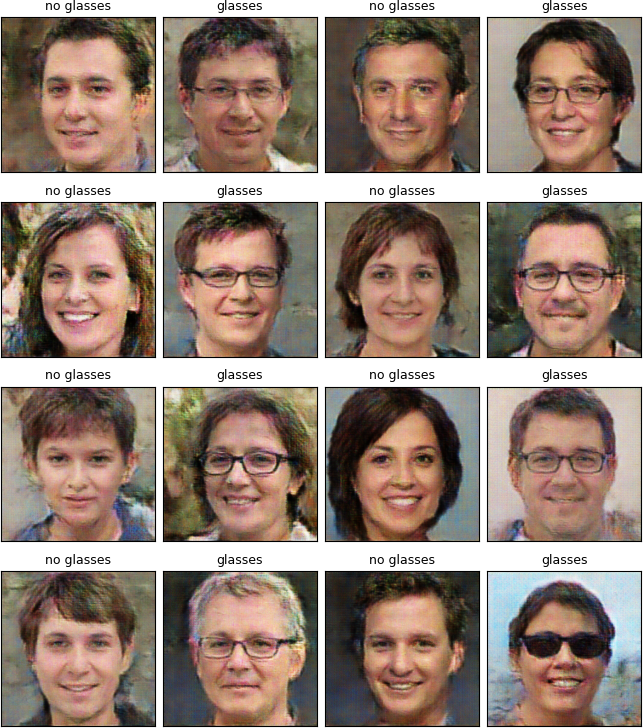

Generator Images After 49th Epoch of Training

with 5 epochs of extra critic training

Seems to me that the images are more realistic. And for the most part the generator produces the correct image for the given label. What do you think?

Load Saved Model and Generate Images

Okay, let’s use the trained model to generate a set of images or two.

For now I am going to load the TorchScript version of the model, as I don’t expect to do any additional training or modify the model code in any way.

Pretty simple bit of code given previous efforts.

... ...

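    elif tst_model:
        # identify the saved run: 50 epochs, 5 of them with extra critic training
        trn_len = 50
        c_nbr_epochs = 5
        gen_pth = Path(f"./wgan_sv/gen_wgan-wp_g_ng_script_32_{trn_len}_{c_nbr_epochs}.pt")
        # load the TorchScript generator and switch it to inference mode
        genr = torch.jit.load(gen_pth)
        genr.eval()
        # generate two sets of test images, labelled t1 and t2
        for tst in range(1, 3):
            plot_tst_gen(f"t{tst}", i_show=False)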

I will mention that when I add some new code using the image generation functions, I often get a bug that needs resolving. Almost always related to not sorting out tensors and arrays/lists correctly. I apparently have not been learning as well as I would like.

Here’s a sample of two images generated by the above bit of code.

Generator Images After Loading Saved Network from File

I modified the image function slightly to produce larger images so we could get a better look at the generator’s efforts.

With these larger images, one can definitely see issues with the faces and such. But, I am not at the moment prepared to train the model for an hour or two. We will see what the future brings.

But all in all, I would say that for 50 epochs of training, a little over 40 minutes, the generator is doing a pretty good job.

Done

I think that is it for this one. A post pretty focused on a single topic—most unusual for me.

In the next post we will play around with generating a series of morphing images, i.e. transitioning a face with glasses to one without. Perhaps a male face with glasses to a female one. The latter is apparently possible, but at the moment I am not too sure how to get there. The former I believe will be much easier.

Until then may your WGAN-GP hum along as nicely as my current attempt.